Why the Twitter road crash is more serious than you think

Plus the growing evidence that AI chat search is far from ready for prime time, and some great reads on both how ChatGPT actually works and what’s behind “hot, lonely women” catfishing scams

The muting of China’s dissident Tweeters

Elon has been suffering visibility problems on Twitter — ones he has seemingly solved. Many of us are finding that the reach of our Tweets has collapsed. And it turns out that protesters in China are seeing the same — and worse:

More than 30 prominent Chinese dissidents and activists have experienced similar visibility problems on Twitter in recent months, according to interviews with nine of them and screenshots of search results. The activists’ accounts did not appear after a search of their Twitter names, the screenshots showed, though impostor accounts turned up. Three of the dissidents said their accounts had also been suspended with no warning and reinstated only after appeals.

This is a far cry from the days of the Arab Spring, when the Obama State Department, under Hillary Clinton, called Twitter people in. Why? To ask them to move scheduled maintenance to avoid impacting planned protests (as reported in Nick Bilton’s Hatching Twitter). The point of reference is important because we are at a potentially pivotal moment in China:

The issues have also meant that leading Chinese voices on Twitter were muffled at a crucial political moment, even though Mr. Musk has championed free speech. In November, protesters in dozens of Chinese cities objected to President Xi Jinping’s restrictive “zero Covid” policies, in some of the most widespread demonstrations in a generation.

In theory, this might be a good thing in the medium term. It should encourage dissidents to move to decentralised systems like Mastodon, which will be harder for Chinese authorities to block or censor. The authorities might well end up in a “whack-a-mole” situation, trying to shut down or block instances as they pop up.

But getting both the active voices and their engaged audience there will take time, and while that happens, Musk is helping stifle dissent. Something something free speech something something, eh Elon?

Oh, and the Twitter API changes have been delayed again.

UK tabloids make ChatGPT racist

Somebody decided to “interview” publications via ChatGPT.

Unfortunately, certain publications turned ChatGPT into something between a drunk uncle and a paranoid, xenophobic lunatic.

Go on. Guess which ones.

[via Peter at Media Voices]

Bing’s AI version is very much a beta

As more people get access to the AI-powered Bing chat interface (I have access now) that uses a variant of ChatGPT, it’s becoming clearer that it’s still some way from being ready for mainstream use:

- Turns out that Bing’s version of ChatGPT can be downright rude…

- Even more examples of Bing having an emotional reaction to users.

- And it made loads of mistakes even in its public demo.

Again, this technology could be transformative. And it will get better. But it is not ready for prime time yet.

How ChatGPT really works

ChatGPT is basically making informed guesses as to what’s the most likely word to come next in a sentence. Stephen Wolfram has written a surprisingly accessible explainer about what’s happening behind the scenes:

And the remarkable thing is that when ChatGPT does something like write an essay what it’s essentially doing is just asking over and over again “given the text so far, what should the next word be?”—and each time adding a word. (More precisely, as I’ll explain, it’s adding a “token”, which could be just a part of a word, which is why it can sometimes “make up new words”.)

It also has some element of randomness to make what it writes more interesting:

Because for some reason—that maybe one day we’ll have a scientific-style understanding of—if we always pick the highest-ranked word, we’ll typically get a very “flat” essay, that never seems to “show any creativity” (and even sometimes repeats word for word). But if sometimes (at random) we pick lower-ranked words, we get a “more interesting” essay. The fact that there’s randomness here means that if we use the same prompt multiple times, we’re likely to get different essays each time.

This is fascinating, and gives at least some insight into what’s happening — and the inherent limits of that process. And that should, in turn, help us think about where it makes sense to use this technology (highly formulaic pieces) and where it’s not yet going to be a threat.
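For the curious, here is a minimal Python sketch of the idea Wolfram describes: rank the candidate next words, then either always take the top one or sometimes take a lower-ranked one at random. It is an illustration only, not how ChatGPT is actually built. The candidate words, their scores, the function names and the "temperature" setting of 0.8 are all assumptions made up for this example.

```python
import math
import random

# Invented scores for a handful of candidate next words (higher = more likely).
# A real model scores tens of thousands of tokens; these numbers are made up.
TOY_SCORES = {"learn": 4.5, "predict": 3.5, "make": 3.2, "understand": 3.1, "do": 2.9}


def to_probabilities(scores, temperature):
    """Turn raw scores into probabilities (a softmax), sharpened or flattened by temperature."""
    scaled = {tok: s / temperature for tok, s in scores.items()}
    biggest = max(scaled.values())  # subtract the max for numerical stability
    weights = {tok: math.exp(s - biggest) for tok, s in scaled.items()}
    total = sum(weights.values())
    return {tok: w / total for tok, w in weights.items()}


def pick_next_token(scores, temperature, rng):
    """Temperature 0 always takes the top-ranked token; higher values allow lower-ranked ones."""
    if temperature == 0:
        return max(scores, key=scores.get)
    probs = to_probabilities(scores, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


rng = random.Random(0)  # seeded so repeated runs make the same picks
greedy = [pick_next_token(TOY_SCORES, 0, rng) for _ in range(5)]
sampled = [pick_next_token(TOY_SCORES, 0.8, rng) for _ in range(5)]
print("always top-ranked:", greedy)
print("with randomness:  ", sampled)
```

The first list is the same word five times, which is the "flat" behaviour in the quote. The second can include lower-ranked words, and changing the seed changes which ones turn up, which is why the same prompt can produce different essays.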

Quickies

- 👻 Ghost has been doing a lot of work on email deliverability of late – they added list cleaning, and now they’ve built a self-support system if emails aren’t arriving.

- 🔎 Useful post from Barry, rounding up the best approaches to SEO for different styles of paywall.

- 🐘 Ivory, the iOS Mastodon client I wrote about a couple of weeks ago, is getting better, fast. If you have an iPhone, for the moment, this is the best way to experience Mastodon.

- 🫂 Some useful guidance from the Headlines Network about reporting on traumatic breaking news.

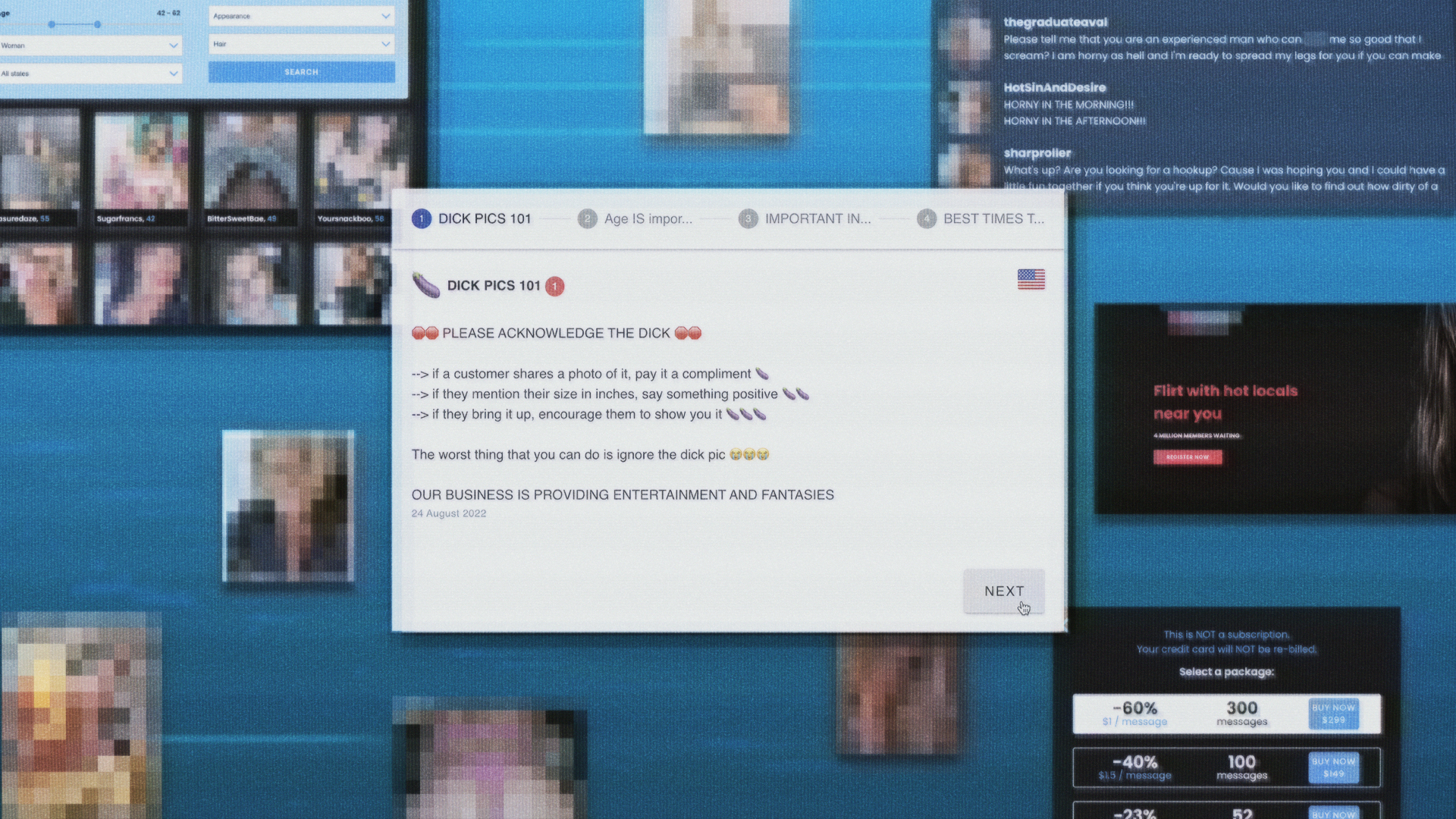

Hot journalism near you

Ever wonder what’s going on behind those “hot women near you” links that lurk in the chumboxes under articles on some websites? Well, you will be entirely unsurprised to discover it’s basically a catfishing scam, but how it operates is fantastic reading:

Great work from VICE World News and the Bureau of Investigative Journalism, found via Charles Arthur.