The New York Times battles trolls with AI

I’ve spent a significant chunk of today reading about AI for another writing project. That led me back to a piece about the New York Times harnessing an AI system called Perspective to help with comment moderation:

“What Moderator really is about is scale,” said Times community editor Bassey Etim, who oversees a core team of 14 moderators; he is project manager for the new content management system for the Times’ moderators, which uses Perspective. He said moderators won’t be replaced by the software, but that their jobs would be augmented.

So, the idea is that the Moderator system will flag up problematic (or apparently problematic) comments for human appraisal. That makes a world of sense. Newspapers have a very specific problem with comments: scale. That manifests in two ways:

- Greater scale gives more incentive for poor behaviour in comments. Much trolling is attention-driven, and the more attention you stand to win, the more inclined you become to go after it. This equation applies.

- Greater scale brings greater moderation costs – to the point where the cost/benefit analysis starts to look a little shonky.

At first glance, this is a good move. If deploying the system reduces the need for human moderation, and makes the moderation that remains more efficient, it sorts out that cost/benefit equation.

I’ve made a note in my diary to check back in a year, and see how it’s going…

Getting Perspective

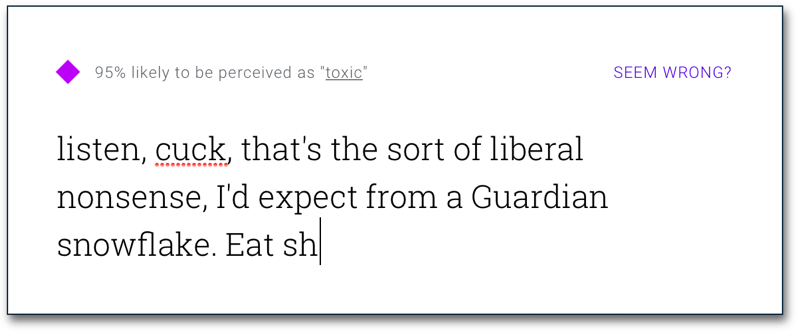

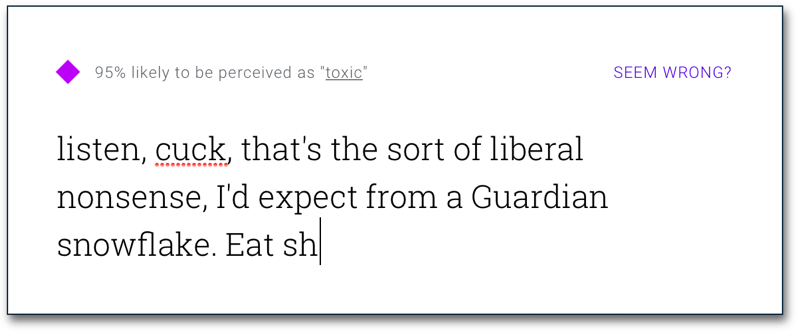

The actual system at play is Perspective, which is also working with The Economist and The Guardian, both organisations which have shown a strong commitment to reader interaction over the years. Perspective opened up its API to partners earlier in the year. You can try it out for yourself, by typing in a comment (or copy/pasting one) and seeing the result.

Everything I tried that was trollish rated in the mid-80s and up, which gave a clear threshold for moderation and/or review. I’m sure this will cause some commenters to work around it, trying atypical phraseology or word use, but, in theory, with machine learning at work, the system should be able to catch up with this.
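To make that threshold idea concrete, here's a minimal sketch of how scored comments might be split into a human review queue and a pass-through pile. It is purely illustrative and not how the Times' Moderator tool works: the `Comment` class, the `triage` function and the 0.85 cut-off are my own assumptions, and the scores are assumed to arrive already computed on a 0 to 1 scale from whatever scoring service is in use.

```python
from dataclasses import dataclass

# Assumption: "mid-80s and up" expressed as a score between 0 and 1.
REVIEW_THRESHOLD = 0.85


@dataclass
class Comment:
    author: str
    text: str
    score: float  # hypothetical precomputed score in [0, 1]


def triage(comments, threshold=REVIEW_THRESHOLD):
    """Split comments into (needs_review, auto_pass) lists by score."""
    needs_review = [c for c in comments if c.score >= threshold]
    auto_pass = [c for c in comments if c.score < threshold]
    # Put the highest-scoring comments at the top of the moderators' queue.
    needs_review.sort(key=lambda c: c.score, reverse=True)
    return needs_review, auto_pass
```

The point of the design is that nothing is deleted automatically: anything over the line simply goes to a human, which matches the "augmented, not replaced" framing above. Where exactly to set the line is a judgement call that would need tuning against real comments.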

One to watch.